Markov Decision Process

Bellman Equation

Dynamic Programming

Model-free Prediction

Model-free Control

Value Function Approximation

Policy gradients

Actor-critic methods

In the reinforcement learning set up, we have a system with states and dynamics among states. Dynamics consist of probabilities for transitions among states and rewards for states. And, there is an agent who can take one of several possible actions in each state. The goal is for the agent to act at each point of time in such a way as to maximize cumulative reward.

Reinforcement Learning is mathematically modeled by a Markov Decision process (MDP).

Markov Decision Process

An MDP is formalized as a tuple , where

: set of states

: set of actions that can be taken on states

: a set of matrices where each matrix gives probabilities of transitions from one state to another (Markov property implies current state is good enough to indicate probability of transition to a subsequent state). Rather than one matrix, we need a set of matrices because probabilities of state transitions are dependent on actions taken on the states. So, each matrix in the set is indexed by an action. Thus, an element of a matrix for action

, denoted

, is the probability of transitioning to state

, when action

is taken in state

.

: This is a set of vectors of rewards(or, precisely, expectation of reward). Each vector gives reward out of a particular state. Thus, an element of vector for state

, denoted by

is the reward that is given for taking action

in state

.

: This is the discount factor that is multiplied to future rewards to determine the value of current state or an action in current state.

Goal of an MDP is to determine a decision policy which gives the optimal action to be taken in each given state so that eventually, you accumulate the maximum possible reward. We model

as a probability distribution denoted

to indicate the probability that the agent will take action

in state

.

A state-value function and an action-value function

are defined.

is the total expected reward considering all future possible using the policy

from the state

. That is, starting from state

, if we take the current and future actions according to policy

,

is the reward that we expect to accumulate given the dynamics (

) of the system. Likewise,

is the total expected reward considering all future possible using the policy

from the state

by taking action

now.

Bellman Equation

and

satisfy the Bellman equations as follows:

and

are related to each other as:

(Average of

over all actions

)

In matrix-vector form, we can write:

Here,

: a column vector indexed by state

. Each element of

is

.

: a column vector, where each element is

.

: a square matrix where rows and columns are indexed by states. An element of

is

.

Optimal value functions/optimal policy

Optimal state-value function is maximum state-value function over all policies:

.

Optimal action-value function is maximum action-value function over all policies:

.

Theorem: A policy called optimal policy exists that achieves optimal state-value function and optimal action-value function.

That is, for all

, and

for all

and

.

There could be multiple optimal policies for a system. But, all of them achieve and

.

Bellman Optimality equation

Given optimal state-value and action-value functions, we can have a particular deterministic optimal policy where:

For this particular optimal policy, the following equation holds:

.

This is called the Bellman optimality equation.

Note that, while the regular Bellman equation holds for any policy , the Bellman optimality equation (in addition to the regular Bellman equation) holds for this particular deterministic optimal policy. In fact, it is easy to derive the Bellman optimality equation from the Bellman equation given the probability distribution of this particular

.

Now, as given by the theorem in previous section, since and

, we can drop the

from the Bellman optimality equation and have:

Thus, Bellman optimality equation is an equation for optimal value functions.

Equivalently, Bellman optimality equation is also:

And,

The difference between the Bellman equation and the Bellman optimality equation is that the average factor is replaced by

.

Dynamic Programming

Two techniques from Dynamic Programming may be employed to figure out the optimal policy in the reinforcement learning set up.

The first one is called the policy iteration, while the second one is called value iteration.

Policy Iteration

Here, we start with an arbitrary policy . Say, the policy has uniform distribution – that is, we choose all actions with equal probability. For this policy, we initialize the state-value function for all states to an arbitrary value ,say

.

We then iterate through the Bellman equation as follows:

Or, matrix-vector form:

Here, is the iteration to refine the values of

. If we initialized,

to

, then we have

for all

.

When we iterate over , we will have

as

.

Once we thus obtain and

, we devise a new policy

that acts greedy on

. That is,

is such that:

Then again, we determine iteratively using the Bellman equation procedure above.

We continue this process iteratively of obtaining a new policy and evaluating its state-value function

, until the policies (their state value functions??) converge. The resultant policy will be (is deemed??) the optimal policy.

Value Iteration

In this method, we iterate directly using the Bellman optimality equation:

.

We again arbitrarily initialize , say, to

, and then use

iteratively to derive new values of .

Eventually, the process converges. That is, as , we will have

.

Model-free prediction

Dynamic programming enables us to determine the state-value and action-value functions given the dynamics (model) of the system. It does this by mathematically using the Bellman equations and plugging in the dynamics (rewards and probabilities).

If the model (rewards and probabilities) of the system is not known a priori, we can empirically estimate the value functions for a given policy. We do this by taking actions according to the given policy, and taking note of the state transitions and rewards. By making enough number of trials, we are able to converge to the value functions for the given policy.

Monte-Carlo learning

This applies to experiments which are run as episodes. Each episode terminates and next episode is independent of the current episode. As an example, when a board game is played, each new game constitutes a separate episode.

Given a policy, action is taken in each state according to the policy. For a state that is arrived at time

, return for a particular run through the termination of the episode is calculated:

Here, is the reward obtained by taking action in the state at time

.

Such returns are added for all the episodes during which the state is visited to obtain total return for the state:

And, number of episodes (or in an alternate method, number of visits??) that the state is visited is calculated.

Value of the state is estimated as mean return , since by law of large numbers

as

.

Note that running average return can calculated online (real-time) as the episodes are run instead of calculating it only after all episodes are completed as follows:

Or equivalently,

In practice in online learning scenario, rather than using for weighing the return

from current episode, a constant factor

with

is used. This leads to the formulation:

What is the reasoning? Rather than the average over all episodes, returns from recent episodes is given more weight than returns from old episodes. Returns from episodes are given weights that exponentially decrease with time.

Temporal-Difference (TD) learning

In contrast to Monte-Carlo learning, Temporal-Difference (TD) learning can learn the value function for non-episodic experiments.

In Monte-Carlo learning, we run through a complete episode, note the “real” return obtained through the end of the episode and accumulate these real returns to estimate the value of a state.

In TD learning, we do as follows:

- we initialize the value for each state.

- we run the experiment (according to the given policy) for a certain number of steps (not necessarily to the end of the episode or experiment). The number of steps

we run the experiment is identified as

-step TD (or TD(

), for short) learning.

- we note the reward obtained in these steps.

- We then use the Bellman equation to estimate the return for the remaining of the experiment. This estimated return is

. This estimated total return

is called TD target.

- We update

similar to online Monte-Carlo learning except that here, we use estimated return rather than the “real” return. That is, we update using:

. The quantity

is called TD error.

How do we determine in TD(

) learning? We don’t. In what is called TD(

) learning, we use geometric weighting of estimated returns of all steps to obtain:

Then, we update as:

Model-free control

Model-free prediction attempts to find state-value and/or action-value functions empirically (when the dynamics of the system are not known a priori) for a given policy. As opposed to this, model-free control attempts to find optimal value functions (state-value and/or action-value) for a system empirically across all policies.

For model-free control, we combine the ideas from both model-free prediction and dynamic programming. Like dynamic programming, we iterate over policies improving the policy each time. Like Monte-Carlo and TD methods from model-free prediction, we estimate the value empirically by taking actions and observing the rewards.

Here is the intuition how the ideas combine:

- start with an arbitrary policy

- take action based on the policy

- observe rewards, update value (based on Monte-carlo or TD method)

- once value function for policy is obtained, derive new policy that acts greedily on the value function

- iterate

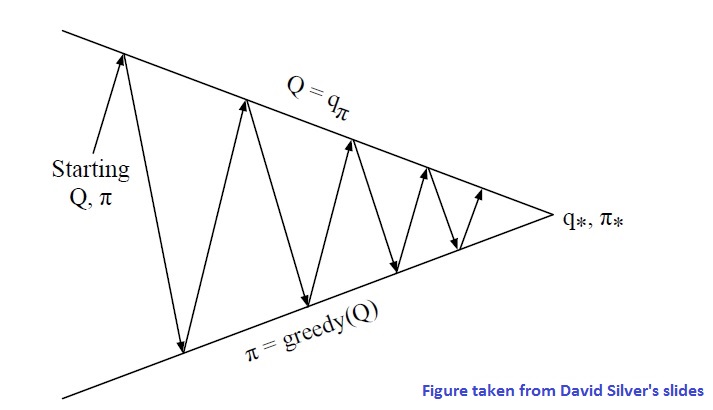

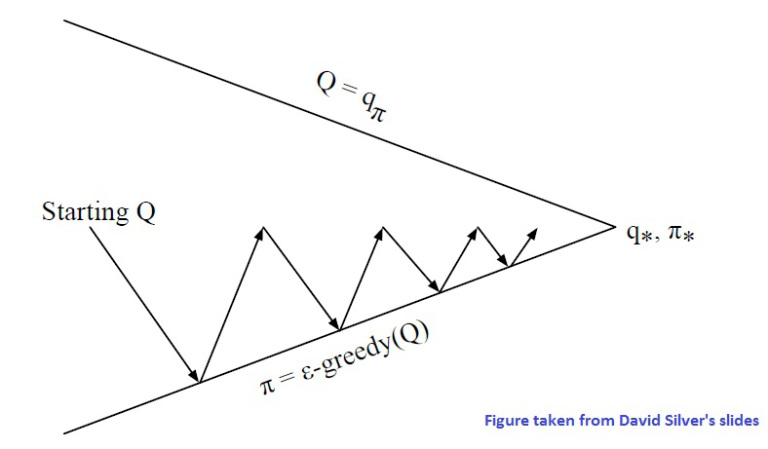

The following diagram illustrates the idea:

There are two points that need to be fixed in this setup:

- instead of using Monte-carlo or TD methods for state-value function estimation, use them to estimate action-value function. Knowing action-value function

allows to readily choose greedy action for a given state as in

.

- by acting greedily on the value function that is derived empirically (rather than full knowledge of dynamics), there is a chance some state or action has not been tried enough, and a potential reward is ignored. This brings the key idea of exploration. So, in deriving the new policy, we act greedily on the obtained value function with a certain probability, but also take a random action with some

probability. This is called

-greedy exploration.Thus, a new policy is derived as follows:

Then, to strike a balance between eventually converging to the optimal action-value function and exploration (GLIE idea), we could make decrease with time. This allows for exploring many states in the beginning, but eventually converging to the optimal policy.

For example, GLIE Monte-Carlo control algorithm is as follows:

- Initialize

arbitrarily

- Start off with an arbitrary policy

- Sample the policy for an episode

- For each state

and action

in the episode,

- Improve policy as follows:

- Iterate

Note that in the above, we did not wait till the convergence of the value function over several episodes for each policy before improving the policy. We modify the policy after each episode. This is typical (more efficient??) in practice. The following figures illustrates this note:

Next improvement idea is the TD idea from model-free prediction. Here, we update every time-step (rather than waiting till the end of the episode) using the estimated return from the Bellman equation. The algorithm gets modified as follows:

- Initialize

arbitrarily

- For current state

of system, take action

using

-greedy policy based on

- Observe reward

and subsequent state

from environment.

- Choose action

(do not take action yet) based on the same policy (

-greedy policy based on the same

function).

- Refine

function for

and

as

- Iterate the steps by taking

in state

The above algorithm is called Sarsa because of occurrence of letters in the update of

.

Then, similar to -step TD algorithm in model-free prediction, there is

-step Sarsa in model-free control. Here, we define

-step

-return as

and update as follows:

And, similar to TD() algorithm, we have Sarsa(

) algorithm where we define:

and we update as follows:

Value Function Approximation

Let us consider what states look like in some practical scenarios. For example, in a chess game, the state consists of layout of the board with pieces. In an Atari game, the state consists of history of a few frames of images going back from current time.

As can be seen, the number of states is enormous (in the Atari game, a state is a vector of as many dimensions as there are pixels in all frames combined). Say, each frame is 80×80 pixels, each pixel takes values 0-255 (gray scale intensities) and we need the previous four frames to represent current state adequately (position, velocity etc). This gives a state space that has a whopping .

So, if we apply the RL learning algorithms directly, it is impractical to arrive at function for all possible states (and actions) as the same state may not be seen enough number of times to be able to learn the

-value.

Further, two states may be similar mostly, but differing in some insignificant ways. For example, in a chess game, the position of a certain piece may be irrelevant given the current set up of the game. Inherently, -values may be dependent on certain features in the states.

To make reinforcement learning practical, we use function approximators to represent the -values for a given state and action. A function approximator is a function that approximates a target function. It is parameterized by a vector

such that for a certain value of

it becomes a close enough approximation of a target function. (Close enough approximation could mean small

distance between the approximator and the target function)

In prediction for a given policy , the target function is

. And, we come up with a function approximator

. The goal is to find

such that the objective (or cost) function

is minimized.

Assuming we know (as in supervised learning), we can apply stochastic gradient descent (SGD) to determine

. SGD samples (randomly chooses) the states and actions to determine the gradient. (Observe that the objective function involves summing over all the states and actions) By applying SGD with mini-batch size of just one sample (online learning??), we have that in each step of SGD, we adjust the weights with

.

In the above, we made one incorrect assumption, which is that we know . But, unlike supervised learning, we do not know the target function in reinforcement learning. In reinforcement learning, we use “presumed” targets:

- In the Monte-carlo method, we use

to get

- In TD(

) method, we use TD target

to get

- In TD(

) method, we use TD target

to get

(The presumed targets in TD methods are also dependent on . Shouldn’t gradient calculation consider the target to be function of

? This is a subtle point, and fixed

-targets (see below) treat the targets as fixed.)

In practice, say, we have the following sequence of state-action pairs in a realization of the policy: .

In each step, we update using one of the presumed targets using that one state-action pair. Recall, we do SGD with one sample of state-action pairs.

Does the stochastic gradient descent work when using presumed targets and highly correlated input state sequences? There are convergence issues that are addressed with batch method techniques like experience replay.

Example function approximators

An example type of function approximator is a linear function approximator. Here, if for each state-action pair , we are able to identify as set of features

to form a feature vector

then, we have a linear function approximator:

Another example type of function approximator is a deep neural network. A deep neural network is a non-linear function approximator. Deep Neural Networks have proven highly successful in feature extraction in images. In the context of reinforcement learning, we are not thinking of a two phase approach where a neural network is first used to identify specific features in the pair, and then mapping the features to

-values. Rather, the neural network automatically maps the states and action pairs to

-values, with its internal layers capturing any relevant features.

When we employ neural networks, we typically use a mini-batch of samples for SGD. We also employ the techniques of experience replay and fixed -targets for better convergence.

-learning is an off-policy variant of SARSA algorithm. Neural networks built to do

-learning are called Deep Q Networks (DQN).

With a DQN outputting , we follow an algorithm along the lines:

- Take action

according to

-greedy policy derived from the neural network output

- Store the tuple

in a replay memory

- Sample random mini-batch of tuples from the replay memory (experience replay)

- Compute presumed targets with respect to fixed parameters

(fixed

-targets)

- Minimize the objective function:

Do this by applying SGD on the mini-batch obtained from.

- Iterate

In the above, we use in the objective function, which is different from what we saw in the SARSA algorithm. The

comes from the update step of

-learning algorithm itself, namely

.

Observe that two actions need to be chosen in the algorithms (both SARSA and -learning) in each update step: (1) the action

used to determine which state-action pairs to update

-values for (2) the action

for calculation of

-value for next state. In both SARSA and

-learning,

-greedy policy chooses actions for (1) to allow enough exploration of state-action space. Convergence of algorithms is guaranteed theoretically (??) provided algorithms do exploration, which is why they employ

-greedy, rather than greedy, for (1). The difference between SARSA and

-learning comes in (2). SARSA is an on-policy algorithm, which means it uses the same

-greedy policy for choosing action in (2).

-learning is an off-policy algorithm, which means it chooses actions for (2) differently from how it chooses actions for (1).

-learning chooses actions for (2) using

(greedy, as opposed to

-greedy) operation.

-learning converges faster(This is my guess. Is this correct?) than SARSA because it chooses action greedily in (2) in estimating

-value on next state. Such estimation is a better (??) approximation to

-value than an estimate obtained by choosing an action

-greedily.

Policy gradients

In the above algorithms, we first determine the (optimal

) function. We then come up with a policy that acts

-greedy on this policy.

In algorithms based on policy gradients, we bypass the optimal value function and aim to get to an optimal policy directly.

One primary advantage of policy gradient based algorithms over value-function based algorithms is in the scenarios with continuous action spaces or many many (high-dimensional) discrete actions. When we have continuous action space, determining the maximum of function over all possible actions

is impractical. On the other hand, policy gradient approaches directly determine the optimum action for a given state.

In policy gradient approach, we parameterize a policy using parameters . And, we need an objective function to determine how good our parameterized policy is. Example objective functions are:

Average value objection function:

Average reward per time-step objective function: .

In the above, is the policy,

is the stationary probability of state

of Markov chain for policy

,

is the value function, and

is the reward obtained in state

when action

is taken.

An optimal policy is one with the highest value of objective function. To determine that maximizes the objective function, we use the stochastic gradient ascent algorithm. For the stochastic gradient descent algorithm, we first need to determine the gradients of the objective function.

Assuming average reward per time-step objective function, that is , we have:

In deriving the above, we used the following fact(?):

The expectation shown above is across all states and actions. The quantity whose expectation is taken is called the score function.

A policy gradient theorem generalizes the above to multi-step scenarios as follows:

An algorithm called Monte-Carlo policy gradient or REINFORCE can be used for episodic(only?) scenarios. The algorithm goes through episodes, and updates the policy by sampling the expectation (stochastic gradient ascent philosophy where rather than averaging across all states and actions, we consider one or a mini-batch of state-action pair(s)) and sampling the (Monte-Carlo philosophy where rather than computing

from a model, we empirically estimate it by observing rewards(?) from episodes).

The algorithm is as follows:

- Initialize

arbitrarily.

- Iterate through several(?) episodes

where action

in state

is determined by policy

.

- Iterate through timesteps

from

through

- Update

as

, where

is a unbiased(?) sample of

- Update

- Iterate through timesteps

Actor-critic methods

Monte-carlo method involves a high variance on , since we are just using the sample

(?). To alleviate this disadvantage, we have the Actor-critic methods which combine value-function and policy-gradient methods.

In the Actor-critic methods, there is a critic that approximates (and updates) the as

using parameters

and there is an actor that updates the policy using parameters

.

Thus, in actor-critic methods, we use an approximate policy gradient namely

We can estimate and update using the value-function based methods that we have seen earlier. Note that, we are not determining the optimal

, but we are just determining the

for the policy

, albeit a changing policy.

Here, we give a simple actor-critic algorithm where in is a linear function approximator that critic uses to approximate

.

The algorithm is as follows:

- Initialize

arbitrarily. Let the initial state be called

.

- Sample action

for state

according to policy

- Iterate

- Take action

- Observe reward

, and next state

- Sample action

for state

according to policy

- Take action

A variant of the above algorithm uses advantage function , which results in (?)

.